Fusing LiDAR and Photogrammetry for Accurate 3D Data: A Hybrid Approach

Introduction

The rapid advancement of 3D mapping technologies has affected various industries, from urban planning and autonomous navigation to augmented reality and environmental monitoring. Among the most widely used technologies in 3D data acquisition are LiDAR (Light Detection and Ranging) and photogrammetry. Both methods generate point clouds, which are digital representations of physical environments.

LiDAR is a remote sensing technique that uses laser pulses to measure distances with high geometric accuracy. It can capture detailed spatial structures, making it very useful for terrain mapping, self-driving cars, and geospatial applications. The precise measurements provided by LiDAR make it a reliable tool for applications requiring accurate depth and structural information.

On the other hand, photogrammetry reconstructs 3D models by analyzing multiple 2D images captured from different angles. It is highly effective in preserving texture details and is widely used in architecture and cultural heritage documentation. Unlike LiDAR, photogrammetry provides color and high-resolution textures, but its accuracy is often affected by camera quality, lighting conditions, and perspective distortions. While LiDAR provides high precision in spatial measurements, it lacks visual texture information, making it less effective for applications where detailed textures and visual realism are crucial. Additionally, LiDAR systems are often expensive and require specialized hardware, which limits their accessibility in consumer-level applications.

A Hybrid Approach

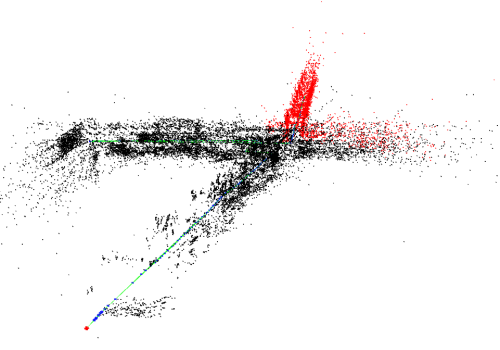

By combining the geometric accuracy of LiDAR with the detailed textures and point clouds of photogrammetry, we have found that it is possible to generate high-quality point clouds that are both visually realistic and spatially precise. Through our research, we have developed and tested fusion techniques that enhance structural continuity and minimize artifacts. By applying convex hull clipping, we retain more of each object while removing noise, which leads to cleaner and more reliable 3D reconstructions. We have also evaluated several photogrammetry approaches in urban environments, analyzing how well they preserve object size. This hybrid approach is especially valuable for applications that demand both high accuracy and rich visual textures, such as augmented reality, autonomous systems, and urban modeling. Integrating machine learning algorithms with traditional alignment techniques can further improve the efficiency, scalability, and overall quality of fused point clouds.
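
To make the idea of convex hull clipping concrete, here is a minimal sketch, not necessarily the procedure used in the paper. It assumes both clouds are already registered in the same coordinate frame and that the LiDAR points of one object define the region to keep. The function name `clip_to_convex_hull` and the tolerance value are illustrative assumptions.

```python
import numpy as np
from scipy.spatial import ConvexHull


def clip_to_convex_hull(reference_points, candidate_points, tol=1e-9):
    """Keep the candidate points that lie inside the convex hull of reference_points.

    Hypothetical helper: assumes both (N, 3) arrays share one coordinate frame.
    """
    hull = ConvexHull(reference_points)
    # Each row of hull.equations is [normal, offset]; a point x is inside
    # the hull when normal . x + offset <= 0 holds for every facet.
    normals = hull.equations[:, :-1]
    offsets = hull.equations[:, -1]
    signed = candidate_points @ normals.T + offsets
    inside = np.all(signed <= tol, axis=1)
    return candidate_points[inside]


# Toy data (made up): the corners of a unit cube stand in for LiDAR points of an object.
lidar_object = np.array(
    [[x, y, z] for x in (0.0, 1.0) for y in (0.0, 1.0) for z in (0.0, 1.0)]
)
photo_points = np.array(
    [[0.5, 0.5, 0.5], [1.5, 0.5, 0.5], [0.1, 0.9, 0.2], [-0.2, 0.5, 0.5]]
)
clipped = clip_to_convex_hull(lidar_object, photo_points)
print(clipped)  # the two points inside the cube remain
```

A convex hull is a coarse envelope, so this kind of clipping suits compact objects better than concave or hollow ones.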

Find our paper here: Fusing LiDAR and Photogrammetry for Accurate 3D Data: A Hybrid Approach

Point Cloud Accuracy

Accuracy is one of the most important factors in 3D mapping applications. LiDAR is highly accurate due to its direct measurement technique. It is effective for capturing precise elevation data, even in low-light conditions or complex terrains. This makes LiDAR a preferred choice for applications such as topographic surveys or autonomous vehicles. Photogrammetry determines depth by examining 2D images. While modern photogrammetry techniques such as Structure from Motion (SfM) can achieve high levels of accuracy, they are inherently less precise than LiDAR: their accuracy depends on image resolution, camera calibration, and lighting conditions, and the reconstructed scale is relative unless it is tied to a known reference.
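
Because a photogrammetric reconstruction has only relative scale, it has to be scaled and aligned before it can be fused with metric LiDAR data. The sketch below shows one standard way to do this, a least-squares similarity transform (Umeyama's closed-form method), and is not necessarily the alignment used in the paper. It assumes a set of corresponding 3D points is already known in both clouds (for example, matched markers); the function name `estimate_similarity` is an illustrative assumption.

```python
import numpy as np


def estimate_similarity(source, target):
    """Least-squares scale s, rotation R, translation t with target ~ s * R @ source + t.

    Assumes source[i] and target[i] are the same physical point, both (N, 3).
    """
    mu_s, mu_t = source.mean(axis=0), target.mean(axis=0)
    src_c, tgt_c = source - mu_s, target - mu_t
    # Cross-covariance between the centered target and source points.
    cov = tgt_c.T @ src_c / len(source)
    U, D, Vt = np.linalg.svd(cov)
    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0
    R = U @ S @ Vt
    var_s = (src_c ** 2).sum() / len(source)
    scale = np.trace(np.diag(D) @ S) / var_s
    t = mu_t - scale * R @ mu_s
    return scale, R, t


# Synthetic check (made-up data): recover a known scale, rotation, and shift.
rng = np.random.default_rng(0)
photo_pts = rng.normal(size=(50, 3))
angle = np.deg2rad(30.0)
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle), np.cos(angle), 0.0],
                   [0.0, 0.0, 1.0]])
lidar_pts = 2.5 * photo_pts @ R_true.T + np.array([1.0, -2.0, 0.5])
scale, R, t = estimate_similarity(photo_pts, lidar_pts)
aligned = scale * photo_pts @ R.T + t  # photogrammetry points in the LiDAR frame
```

In practice the correspondences are noisy, so an estimate like this is usually followed by a refinement step such as ICP.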

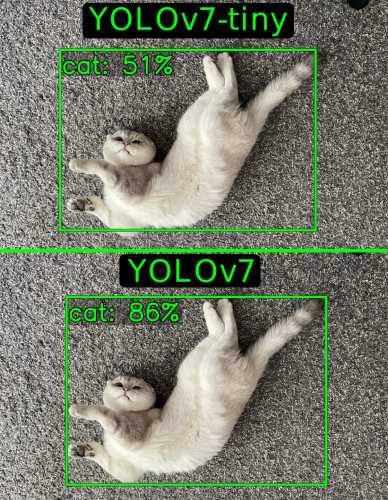

NeRF vs. 3D Gaussian Splatting: Which Method Performs Better for Point Cloud Generation?

Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS) are AI-based methods for point cloud generation and texture mapping. Both methods can improve photogrammetry-based models but differ in computational performance and visual output.

Key Takeaways:

• NeRF produces highly detailed, realistic textures but is computationally expensive.
• 3D Gaussian Splatting is significantly faster, making it more suitable when faster inference is needed, while still achieving high fidelity.

When to Use NeRF vs. 3DGS

• Use NeRF when higher quality is needed.
• Use 3DGS when speed and efficiency are priorities (e.g., large-scale reconstruction).

Image 1. NeRF vs 3DGS examples (nerfstudio).

Real-World Applications of LiDAR-Photogrammetry Fusion

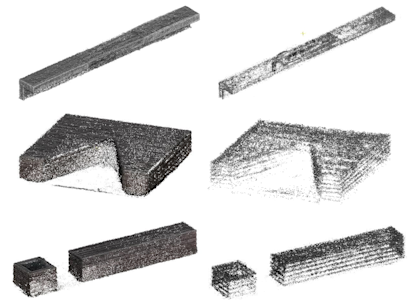

The fusion of LiDAR and photogrammetry has applications across multiple industries, including urban planning, geographic information systems, autonomous vehicles, and augmented reality. By combining high geometric accuracy (LiDAR) with rich visual detail (photogrammetry), this hybrid approach is transforming how 3D data is collected, analyzed, and visualized. A convex hull clipping method has also been integrated, which raises the success rate of retaining key object details while removing extraneous points.

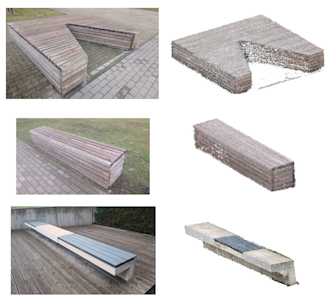

Urban Planning and Smart Cities

Urban planners and architects use 3D city models to design and develop sustainable infrastructure. The combination of LiDAR and photogrammetry enables high-precision digital twins of cities, allowing planners to analyze terrain and elevation data to plan road networks, buildings, and public spaces. In our research, we analyzed urban objects, specifically benches in urban environments, using various photogrammetry methods. We found that the reconstructed dimensions differ by less than 6% from measurements of the real objects, indicating a high level of size preservation.

Image 2. Examples of urban objects produced with our hybrid methodology.
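
A size-preservation check of the kind described above can be sketched as follows. This is an illustrative assumption about how such a comparison might be computed, using axis-aligned bounding-box extents. The bench dimensions below are made-up example values, not measurements from the study.

```python
import numpy as np


def bounding_box_dimensions(points):
    """Axis-aligned extents (x, y, z) of an (N, 3) point cloud."""
    return points.max(axis=0) - points.min(axis=0)


def relative_dimension_error(measured, reference):
    """Per-axis absolute error as a percentage of the reference dimension."""
    measured = np.asarray(measured, dtype=float)
    reference = np.asarray(reference, dtype=float)
    return np.abs(measured - reference) / reference * 100.0


# Hypothetical bench: tape-measured size vs. a reconstructed cloud (metres).
real_dimensions = np.array([1.80, 0.60, 0.45])
reconstructed_cloud = np.array([[0.0, 0.0, 0.0], [1.85, 0.58, 0.46]])

dims = bounding_box_dimensions(reconstructed_cloud)
errors = relative_dimension_error(dims, real_dimensions)
print(errors.round(2), bool(errors.max() < 6.0))
```

Axis-aligned extents are only meaningful when the object is aligned with the coordinate axes; for arbitrarily rotated objects, an oriented bounding box would be the more appropriate measure.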

Autonomous Vehicles and Robotics

Self-driving cars and robots rely on precise 3D environmental mapping for navigation, obstacle avoidance, and object detection. The fusion of LiDAR and photogrammetry enhances perception: LiDAR provides accurate depth information for autonomous navigation, and combining spatial and texture data improves object recognition.
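
One simple way to combine spatial and texture data is to give each LiDAR point the color of its nearest photogrammetry point. The sketch below is a minimal illustration under the assumption that both clouds are already aligned in the same frame. It is not the fusion pipeline from the paper, and the name `transfer_colors` and the 5 cm distance threshold are illustrative assumptions.

```python
import numpy as np
from scipy.spatial import cKDTree


def transfer_colors(lidar_xyz, photo_xyz, photo_rgb, max_distance=0.05):
    """Assign each LiDAR point the color of its nearest photogrammetry point.

    Points with no neighbor within max_distance stay black and are
    flagged False in the returned mask.
    """
    tree = cKDTree(photo_xyz)
    distances, indices = tree.query(lidar_xyz, k=1, distance_upper_bound=max_distance)
    # cKDTree reports a missing neighbor as an infinite distance.
    has_match = np.isfinite(distances)
    colors = np.zeros((len(lidar_xyz), 3), dtype=photo_rgb.dtype)
    colors[has_match] = photo_rgb[indices[has_match]]
    return colors, has_match


# Toy data (made up): two colored photogrammetry points, three LiDAR points.
photo_xyz = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
photo_rgb = np.array([[255, 0, 0], [0, 255, 0]], dtype=np.uint8)
lidar_xyz = np.array([[0.01, 0.0, 0.0], [1.0, 0.02, 0.0], [5.0, 5.0, 5.0]])

colors, has_match = transfer_colors(lidar_xyz, photo_xyz, photo_rgb)
print(colors, has_match)
```

The distance threshold keeps LiDAR points in regions the images never covered from picking up unrelated colors.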

Augmented Reality (AR) and Virtual Reality (VR)

AR and VR applications require high-quality 3D models for immersive experiences in gaming, training, and visualization. By fusing LiDAR and photogrammetry, developers can create highly realistic 3D environments for VR simulations or accurate AR overlays for architecture, retail, and tourism applications. Retail and e-commerce could use AR-based LiDAR-photogrammetry models to allow customers to visualize furniture, clothing, and accessories in real-world settings. Similarly, heritage preservation projects use hybrid 3D models to digitally restore historic landmarks.

Construction and Infrastructure Monitoring

Construction firms use 3D mapping to track progress, detect defects, and improve project planning. LiDAR and photogrammetry fusion enables accurate site scans of elements such as bridges, roads, and tunnels.

Conclusion

The fusion of LiDAR and photogrammetry is changing how 3D mapping and spatial analysis are done, with impact on industries from urban development to robotics. By fusing these two techniques, we are able to bridge the gap between accuracy and visual fidelity. This hybrid approach enhances terrain mapping, urban planning, and real-time simulations, providing more complete and reliable 3D representations for diverse industries. As machine learning and computational techniques improve, LiDAR and photogrammetry will become even more tightly integrated, unlocking new possibilities in fields ranging from autonomous robotics to real-time 3D visualization.
